How to Use Seedance 2.0: Beginner FAQ, Prompting Tips, and Troubleshooting

Seedance 2.0 gets much easier once you stop trying to learn everything at once.

Most beginners do not need all four input types, a perfect prompt structure, and a cinematic concept on day one. What helps is understanding what the tool is good at, what each reference type does, and how to fix the most common mistakes without starting over every time.

This article answers the questions that usually come up in the first few sessions.

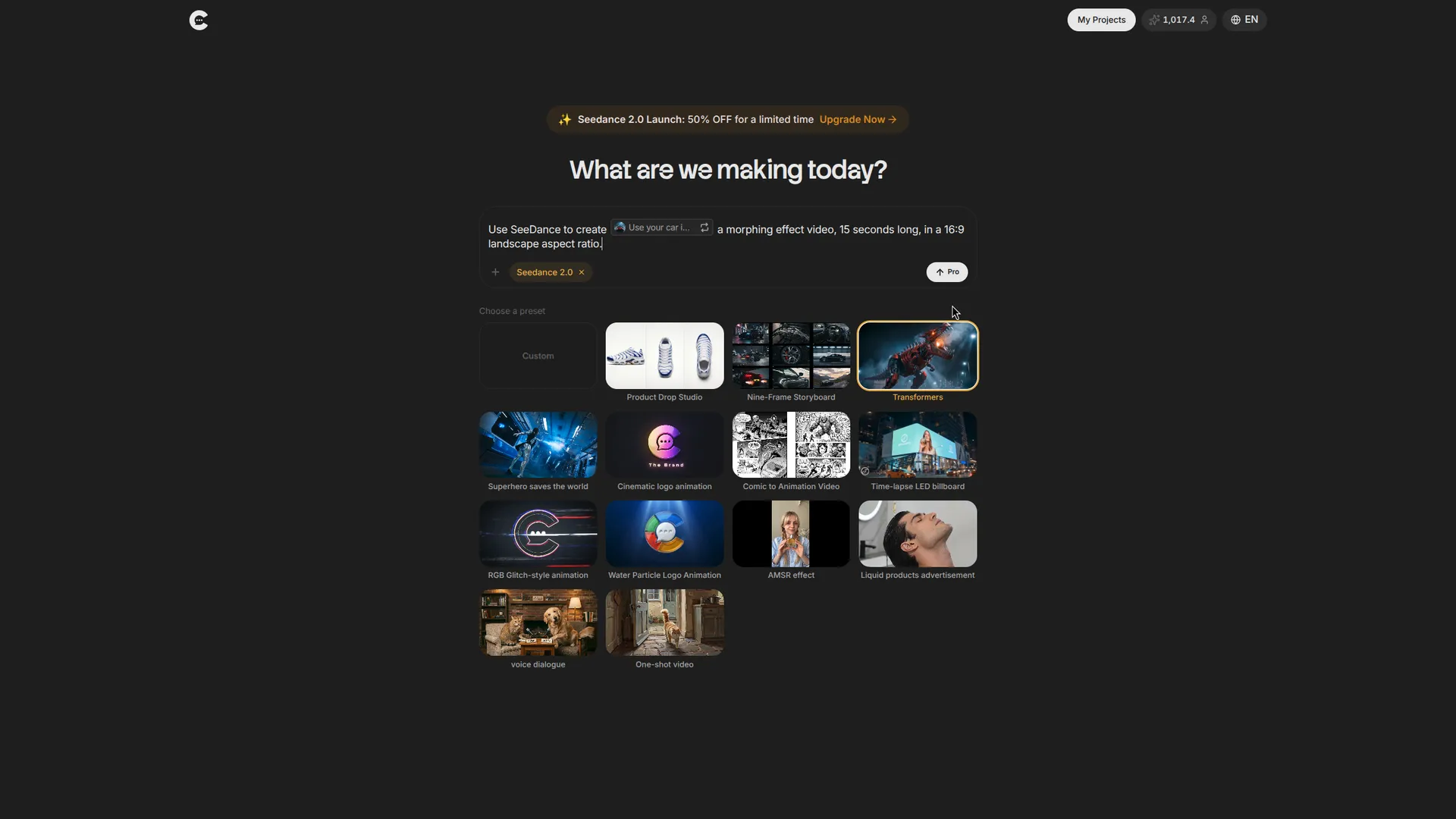

What Is Seedance 2.0?

Seedance 2.0 is a multimodal AI video model that can work with text, images, audio, and video references. Its biggest advantage is not just that it makes video, but that it lets you shape video with more than text alone.

That is why it can feel unusually controllable once the prompt logic clicks.

If you want the deeper breakdown of how that logic works, start with the full Seedance 2.0 Prompt Guide.

Can a Beginner Use Seedance 2.0?

Yes. The easiest mistake is assuming you have to master every advanced feature before the tool becomes useful.

You do not.

A much better way to learn is:

- start with one image and one sentence

- look at the result closely

- identify what is off

- add only the missing instruction

That loop teaches you faster than reading ten prompt threads and trying to memorize all of them.

Should I Start With Image-Only or Full Multimodal?

Start with the lightest workflow that can express your idea.

If you only have one image and want a simple shot with mood and motion, image plus text is usually enough.

If your idea depends on:

- a specific camera move

- a very particular rhythm

- a borrowed sound mood

- more stable subject consistency

then use the full multimodal workflow and bring in video or audio references.

Simple idea, simple setup. Complex idea, richer references.

I Only Have One Image. Can I Still Generate a Video?

Yes. That is one of the cleanest ways to start.

Take one strong image and write what changes over time:

Even with one image, timing matters. A single image plus a strong timeline prompt is usually better than three vague references and no real direction.
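As a minimal sketch of what a timeline prompt could look like, the Python below assembles one from a subject line and a list of timed beats. The `build_timeline_prompt` helper, the sample scene, and the `0-3s:` timestamp format are illustrative assumptions, not an official Seedance syntax.

```python
def build_timeline_prompt(subject: str, beats: list[tuple[int, int, str]]) -> str:
    """Join a subject line and (start, end, description) beats into one prompt."""
    lines = [subject]
    for start, end, description in beats:
        # Hypothetical timestamp format; adapt it to whatever your interface accepts.
        lines.append(f"{start}-{end}s: {description}")
    return "\n".join(lines)


prompt = build_timeline_prompt(
    "A woman stands on a rainy street at night, as in the uploaded image.",
    [
        (0, 3, "she looks up as the rain starts to ease"),
        (3, 6, "neon signs flicker on behind her; slow push-in"),
        (6, 8, "she smiles slightly; the camera holds still"),
    ],
)
print(prompt)
```

Writing the beats as data first makes it easy to change one time range or one action without rewriting the whole prompt.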

I Have a Video and Want Its Camera Movement. How Do I Use It?

Upload the clip and state clearly what job it is doing in the prompt.

Good:

Weak:

The model needs to know whether the video is teaching motion, rhythm, atmosphere, or content. In most cases, camera and pacing are the most valuable jobs for a reference clip.
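One way to make that habit mechanical is to pair every uploaded asset with an explicit role before writing anything else. The sketch below is only an illustration: the `Reference` class, the asset labels, and the sentence template are hypothetical, and the four role names are taken from the paragraph above.

```python
from dataclasses import dataclass

# The four jobs a reference can do, as listed in the text above.
ALLOWED_ROLES = {"motion", "rhythm", "atmosphere", "content"}


@dataclass
class Reference:
    label: str  # hypothetical name for the uploaded asset
    role: str   # which of the four jobs this asset does


def describe_references(refs: list[Reference]) -> str:
    """Turn each (asset, role) pair into one explicit sentence for the prompt."""
    sentences = []
    for ref in refs:
        if ref.role not in ALLOWED_ROLES:
            raise ValueError(f"unknown role for {ref.label}: {ref.role}")
        sentences.append(f"Use the {ref.label} for {ref.role} only.")
    return " ".join(sentences)


print(describe_references([
    Reference("reference video", "motion"),
    Reference("audio clip", "rhythm"),
]))
```

If an asset has no role you can name, that is usually a sign it does not belong in the prompt.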

I Do Not Know How To Write Prompts. Where Should I Start?

Start by describing only four things:

- what the viewer sees

- what changes

- when it changes

- how the camera behaves

That is enough for a usable first version.

For example:

You do not need poetic language. You need specificity.
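The four-part checklist can be sketched as a small Python helper that refuses to build a prompt until every part is filled in. The key names (`sees`, `changes`, `when`, `camera`) and the sample scene are assumptions made for illustration only.

```python
# Hypothetical keys for the four things a first prompt should describe.
FOUR_PARTS = ("sees", "changes", "when", "camera")


def missing_parts(brief: dict[str, str]) -> list[str]:
    """Return the checklist items that are absent or blank."""
    return [part for part in FOUR_PARTS if not brief.get(part, "").strip()]


def to_prompt(brief: dict[str, str]) -> str:
    """Join the four parts in a fixed order, or fail loudly if one is missing."""
    gaps = missing_parts(brief)
    if gaps:
        raise ValueError(f"brief is missing: {', '.join(gaps)}")
    return " ".join(brief[part].strip() for part in FOUR_PARTS)


brief = {
    "sees": "A ceramic mug on a wooden table by a window.",
    "changes": "Steam rises and the morning light grows warmer.",
    "when": "The light shifts over the first 4 seconds.",
    "camera": "Slow push-in, no cuts.",
}
print(to_prompt(brief))
```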

Why Does the Result Look Nothing Like What I Imagined?

Usually because the prompt is too broad for the amount of precision you want.

"Make a beautiful video" is not a direction. It is a wish.

If the result is off, diagnose it:

- wrong subject = strengthen subject references

- wrong camera = strengthen video reference or camera instruction

- wrong pacing = add timestamps or beat cues

- wrong mood = add clearer lighting, palette, or audio language

Fix the broken part, not the entire prompt.
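The diagnosis list above is effectively a lookup table, and writing it as one makes the "change one thing at a time" habit explicit. This is a sketch only; the `FIXES` mapping copies the article's four cases, and the `next_fix` name is made up for the example.

```python
# Symptom -> targeted fix, copied from the diagnosis list above.
FIXES = {
    "subject": "strengthen subject references",
    "camera": "strengthen video reference or camera instruction",
    "pacing": "add timestamps or beat cues",
    "mood": "add clearer lighting, palette, or audio language",
}


def next_fix(wrong: str) -> str:
    """Return the single change to try for the part of the result that is off."""
    try:
        return FIXES[wrong]
    except KeyError:
        raise ValueError(f"unknown symptom: {wrong!r}") from None


print(next_fix("pacing"))
```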

Why Does the Character Change From Shot to Shot?

Because one image rarely locks identity well enough across multiple beats.

If consistency matters, use:

- front view

- side view

- outfit reference

- clear text about what must stay stable

Think of it less as one perfect image and more as a packet of identity information.

How Do I Keep One Continuous Shot Instead of Random Cuts?

Say it directly.

If you do not tell Seedance to avoid cuts, it may cut. The model will make its own editorial decisions unless you remove that freedom.

Useful phrases:

- one continuous take

- no cuts

- no jump cuts

- uninterrupted camera movement

- keep the action fluid from start to finish

If the action should accelerate, stop, or drift, describe that too. Continuous does not mean static.

How Do I Recreate a Movie-Style Shot Without Copying the Scene?

Split the instruction in two:

- what to borrow

- what to generate

Example:

That structure is what keeps the result feeling inspired by the reference rather than trapped inside it.

How Do I Make the Video Hit the Beat?

Use sound or rhythm references to control timing, and use image or scene instructions to control content.

The simplest formula is:

If the beat matters, do not leave timing implied. Make it part of the brief.
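If you know the tempo of your audio reference, the beat positions can be worked out ahead of time and written into the brief. The sketch below uses only the standard tempo arithmetic (one beat lasts 60 / BPM seconds). The function names and the `1.0s:` cue format are assumptions, and how precisely the model follows such timestamps is not something this example can promise.

```python
def beat_times(bpm: float, duration_s: float, every_n_beats: int = 1) -> list[float]:
    """List the timestamps (in seconds) of every n-th beat inside the clip."""
    if bpm <= 0 or every_n_beats < 1:
        raise ValueError("bpm must be positive and every_n_beats at least 1")
    step = (60.0 / bpm) * every_n_beats  # seconds between chosen beats
    times = []
    k = 0
    while k * step < duration_s:
        times.append(round(k * step, 2))
        k += 1
    return times


def beat_cues(times: list[float], actions: list[str]) -> str:
    """Pair each beat time with an action, one cue per line."""
    return "\n".join(f"{t:.1f}s: {action}" for t, action in zip(times, actions))


times = beat_times(bpm=120, duration_s=4, every_n_beats=2)
print(beat_cues(times, ["door opens", "light flares", "dancer turns", "hard stop"]))
```

At 120 BPM, every second beat falls one second apart, so a 4-second clip gets cues at 0, 1, 2, and 3 seconds.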

Can I Continue a Clip Instead of Starting Over?

Yes. Seedance can extend existing footage.

The important rule is that the duration you choose should be the added section, not the final combined runtime.

If you have a 9-second clip and want 6 more seconds, set the extension to 6 seconds and describe only the new material.

That keeps the continuation clean and readable for the model.
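The duration rule is simple subtraction, shown here as a small Python check. The `extension_seconds` name is hypothetical; the numbers come from the 9-second example above.

```python
def extension_seconds(existing_s: float, target_total_s: float) -> float:
    """Return the duration to request: only the added section, not the total."""
    if target_total_s <= existing_s:
        raise ValueError("target runtime must be longer than the existing clip")
    return target_total_s - existing_s


# A 9-second clip that should end up 15 seconds long needs a 6-second extension.
print(extension_seconds(existing_s=9, target_total_s=15))
```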

When Should I Use More References Instead of Better Writing?

Use more references when the missing information is hard to describe in text.

That usually means:

- camera movement

- motion rhythm

- product or character consistency

- exact sound mood

Use better writing when the missing information is narrative, timing, or constraints.

That usually means:

- action order

- dialogue

- what should stay on screen

- what should not happen

If text feels like the wrong tool for the job, it probably is.

Final Thought

Seedance 2.0 gets much less intimidating once you stop thinking in terms of a "perfect prompt" and start thinking in terms of a "clear assignment".

Tell the model what each asset is doing. Tell it when the beats change. Tell it how strict the shot should be. Then revise only what failed.

For more advanced workflows, camera replication, editing, and multimodal examples, read the full Seedance 2.0 Prompt Guide.