Free GPT Image 2: Every Access Point Compared Honestly

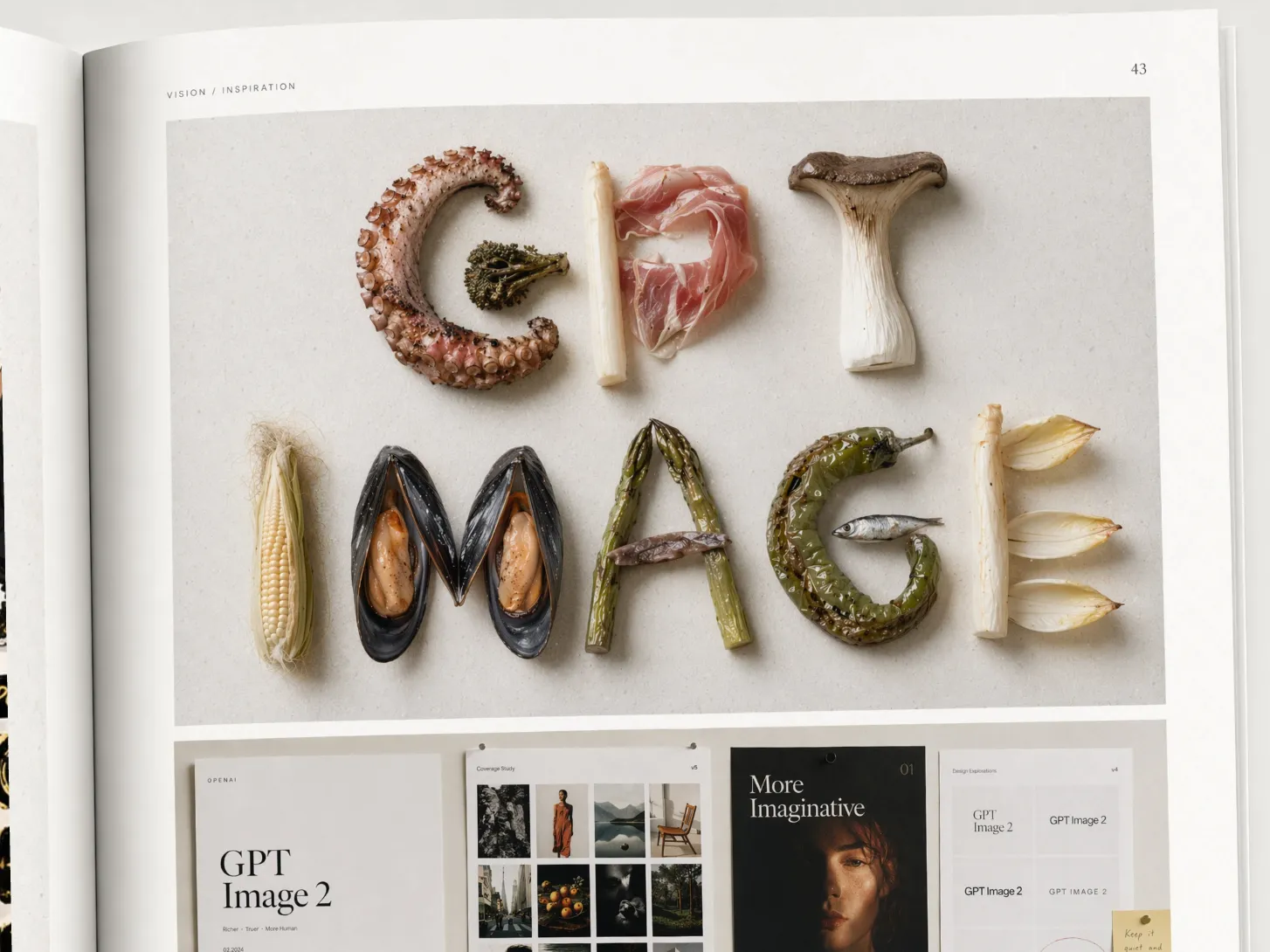

GPT Image 2 is OpenAI’s most capable image generation model to date, and it’s genuinely impressive: photorealistic outputs, near-perfect text rendering across multiple languages, and instruction-following that actually matches what you described. If you’ve ever tried to put legible words inside an AI-generated image and gotten back garbled nonsense, you’ll understand why that last point matters.

Here’s the tension, though. “Free GPT Image 2” doesn’t mean the same thing everywhere.

Some platforms require an account. Some cap you at a handful of generations per day. Some quietly restrict commercial use in their terms of service, which matters the moment you put that image in a client deliverable or a product ad. According to OpenAI’s own usage documentation, even images generated through the standard ChatGPT interface carry conditions that vary by subscription tier.

I’ve spent time across every major platform currently offering free access to this model, and the differences are sharper than most comparison articles admit. Resolution limits, watermarks, queue times, workflow friction, and whether the output actually lands where you need it all vary more than the “free” label suggests.

This article covers the main legitimate access points for GPT Image 2, compares them honestly across six dimensions, and helps you pick the right one for your actual use case.

What Makes GPT Image 2 Different from Previous Models?

GPT Image 2 is OpenAI’s native image generation model, the direct successor to GPT Image 1. The two biggest practical upgrades over GPT Image 1 are reliable text rendering, especially for longer strings and non-Latin scripts, and tighter instruction-following through stronger reasoning. If you’ve ever watched an earlier AI model turn “SALE 50% OFF” into garbled nonsense, GPT Image 2 is the fix. These aren’t marginal improvements; they represent a fundamental shift in how the model processes and renders visual content.

Text Rendering Accuracy

Earlier models treated text in images as a visual texture rather than a string of characters. The results were consistently bad: misspelled words, fused letters, broken logos. GPT Image 2 handles this differently. According to OpenAI’s GPT Image 2 release documentation, the model produces legible, accurately spelled text across multiple languages, including non-Latin scripts.

This matters most for creators building social cards, product banners, or app promo visuals. If you’re using AI-generated images inside a video project, readable on-screen text is non-negotiable. For a deeper look at how that fits into a full editing workflow, the guide to AI image generation for video editing covers the practical side.

Photorealism and Instruction-Following

The photorealism upgrade is harder to quantify, but you notice it quickly. Lighting behaves more consistently, surfaces have actual texture, and faces don’t drift into uncanny territory as often.

More importantly, complex prompts land closer to what you described. With GPT Image 1, a prompt like “a coffee mug on a marble counter, morning light from the left, condensation on the mug” often produced something only loosely related: the mug would land, but the lighting direction or the condensation detail might quietly get dropped because the model had no way to check its own output. GPT Image 2 follows the scene description with noticeably higher fidelity. I’ve seen multi-element compositions that previously took three prompt iterations render correctly on the first try.

The model still struggles with complex multi-character scenes and hands. That’s worth knowing before you build a workflow around it.

Every Free GPT Image 2 Platform, Side by Side

Not all “free” access is equal. The five main platforms offering GPT Image 2 access differ across six dimensions: sign-up requirements, generation limits, output resolution, commercial use rights, speed, and best-fit use case. According to OpenAI’s usage policies, even free-tier users retain ownership of generated images, but that policy only applies when you’re using OpenAI’s own products directly. Third-party wrappers operate under their own terms, which changes the picture considerably.

Here’s how the five main options stack up.

| Platform | Sign-up required | Generation limit | Output resolution | Commercial use | Speed | Best for |
|---|---|---|---|---|---|---|
| ChatGPT free tier | Yes | ~2-3 images/day | Standard (1024px) | Unclear for free users | Fast | Quick one-off generations |
| VisualGPT.io | No | Limited daily runs | Standard | Not stated | Moderate | No-account testing |
| EaseMate AI | No | Limited free tier | Capped or watermarked | Partial | Moderate | Watermark-tolerant drafts |
| Replicate | Yes | No free tier; trial credits only | Full | Yes (pay-per-run) | Fast | Developers and API users |
| ChatCut | Yes | Free to use; credit-based | Full | Yes | Fast | Video creators |
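
If you’d rather filter the table than eyeball it, here’s a minimal sketch that encodes three of its columns and shortlists platforms by constraint. The values are transcribed from the table above; the `Platform` class, the `shortlist` function, and its parameter names are my own hypothetical helpers, not part of any platform’s API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    name: str
    signup_required: bool
    resolution: str
    best_for: str


# Values transcribed from the comparison table; update them if the table changes.
PLATFORMS = [
    Platform("ChatGPT free tier", True, "Standard (1024px)", "Quick one-off generations"),
    Platform("VisualGPT.io", False, "Standard", "No-account testing"),
    Platform("EaseMate AI", False, "Capped or watermarked", "Watermark-tolerant drafts"),
    Platform("Replicate", True, "Full", "Developers and API users"),
    Platform("ChatCut", True, "Full", "Video creators"),
]


def shortlist(platforms, *, allow_signup=True, need_full_resolution=False):
    """Return the names of platforms that satisfy both constraints."""
    result = []
    for p in platforms:
        if p.signup_required and not allow_signup:
            continue  # caller does not want to create an account
        if need_full_resolution and p.resolution != "Full":
            continue  # caller needs uncapped, unwatermarked output
        result.append(p.name)
    return result


print(shortlist(PLATFORMS, allow_signup=False))
print(shortlist(PLATFORMS, need_full_resolution=True))
```

Notice that the two filters return disjoint lists: per the table, nothing offers both no sign-up and full resolution, which is the trade-off in a nutshell.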

ChatGPT Free Tier

ChatGPT’s free plan gives you access to GPT Image 2, but the daily limit is tight: roughly 2-3 generations before you hit a wall. You’ll also need an OpenAI account. OpenAI’s terms generally assign ownership of outputs to the user, but they don’t spell out free-tier commercial use separately, so I’d verify the current ToS before putting free-tier images into paid work.

VisualGPT.io

No account needed, which makes it the lowest-friction entry point for testing the model. Daily free runs are capped, and the platform doesn’t publish exact limits. Fine for experimenting; not reliable for production work.

EaseMate AI

Similar story: no sign-up, free tier available, but outputs come with a watermark or resolution restriction. Useful for mockups and drafts where final quality doesn’t matter yet.

Replicate API

Replicate isn’t really a free option. It offers trial credits when you first sign up, but after those run out, you pay per generation. It’s the right choice for developers who want programmatic access, not casual creators looking for free image generation.

ChatCut

ChatCut is the only platform here built specifically for video creators. GPT Image 2 is integrated directly into the editor: you describe the image you want, it generates in the media library, and you drag it straight onto your timeline as a B-roll clip, overlay, or thumbnail. No API key, no separate tool, no export-import loop. For creators building AI motion graphics or assembling video sequences, that workflow difference is significant.

The credit system does mean generations aren’t unlimited, but the free tier covers regular use without requiring a paid plan.

How to Use GPT Image 2 Free in 3 Steps (Inside ChatCut)

ChatCut integrates gpt-image-2 directly inside its browser-based editor, letting you generate images and place them on your video timeline without an OpenAI account, API key, or credit card. According to OpenAI’s product documentation, gpt-image-2 supports both image generation and image editing natively. Because ChatCut already has the model wired in, there’s no separate API setup on your side. Here’s how to go from zero to a generated image on your timeline in under two minutes.

Step 1: Open ChatCut and Start a Project

Go to chatcut.io and sign in. Open an existing project or create a new one. The editor loads in your browser, no download required. You’ll land on the main canvas with the AI chat panel on the left and your timeline at the bottom.

That’s your starting point. No setup, no API configuration.

Step 2: Type Your Image Prompt

In the AI chat panel, describe the image you want. Be specific about text, style, and composition. For example:

"Generate a product shot of a coffee mug on a marble countertop with the text MORNING RITUAL in bold serif font."

gpt-image-2’s text rendering handles that kind of prompt accurately, including custom typography and brand copy. You can also specify aspect ratio, lighting mood, or color palette in the same prompt. If the first result isn’t quite right, refine it in the next message.

Step 3: Drag the Output Into Your Timeline

The generated image lands in your media library in the right panel. From there, drag it onto any video track in the timeline. Use it as a B-roll clip, a title card, a thumbnail, or an overlay. If you’re building out a full scene, ChatCut’s AI video generator lets you extend that workflow into motion.

Other editors make you generate images in a separate tool and import manually. ChatCut keeps the whole workflow in one place, so you stay in the edit without breaking context.

Prompting GPT Image 2 for Best Results

GPT Image 2 rewards specificity. According to OpenAI’s technical documentation, the model was built with native text rendering as a core capability, not an afterthought. That changes how you should structure prompts. Loose instructions leave the model to fill the gaps with its own assumptions, and because GPT Image 2 checks its output against your prompt, the payoff for being specific is much bigger than it was with GPT Image 1. The three common use cases below show the difference: text-heavy images, product shots, and UI mockups.

Prompts for Text-Heavy Images

Specify font style, placement, and language explicitly. GPT Image 2 handles multilingual text accurately, but only if you tell it what you want. Leaving font style unspecified often produces generic results.

Weak prompt: "A poster with the text SUMMER SALE"

Strong prompt: "A vertical event poster, deep navy background, large bold sans-serif headline reading SUMMER SALE centered at the top, subtext '50% off all items' in light gray italic below, clean minimalist layout"

For non-English text, the same logic applies. Try: "A social card with the Japanese text '期間限定セール' in large red brushstroke-style font on a white background, with a thin black border"
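
If you write a lot of these, it can help to assemble the prompt from parts so font style, placement, and language never get left out. This is a rough sketch, not anything the model requires: `TextElement` and `build_text_prompt` are hypothetical names, and the output is just a plain string you paste into whichever platform you’re using.

```python
from dataclasses import dataclass


@dataclass
class TextElement:
    content: str         # exact words to render in the image
    font_style: str      # e.g. "large bold sans-serif"
    placement: str       # e.g. "centered at the top"
    role: str = "text"   # e.g. "headline" or "subtext"


def build_text_prompt(layout, elements, language="English"):
    """Join a layout description and explicit text elements into one prompt."""
    if not elements:
        raise ValueError("at least one text element is required")
    parts = [layout]
    for el in elements:
        # Every element states its style, role, language, wording, and placement.
        parts.append(
            f"{el.font_style} {el.role} in {language} "
            f"reading '{el.content}' {el.placement}"
        )
    return ", ".join(parts)


prompt = build_text_prompt(
    "A vertical event poster, deep navy background",
    [
        TextElement("SUMMER SALE", "large bold sans-serif", "centered at the top", "headline"),
        TextElement("50% off all items", "light gray italic", "below the headline", "subtext"),
    ],
)
print(prompt)
```

For the Japanese social card, the same call works with `language="Japanese"` and the brushstroke font described in `font_style`.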

Prompts for Photorealistic Product Shots

Structure your prompt around four variables: product, surface, lighting, and background. Skip any one of them and the model fills in the gap with its own assumptions, which are often generic.

Weak prompt: "A coffee mug on a table"

Strong prompt: "A ceramic coffee mug with a matte black finish on a white marble countertop, soft natural window light from the left, shallow depth of field, clean white background, commercial product photography style"

I’ve found that adding a photography style reference (“editorial”, “e-commerce white background”, “lifestyle flat lay”) consistently improves output quality. It gives the model a compositional target, not just a subject.
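
Here’s one way to make the four-variable rule hard to forget: a small helper that refuses to build a prompt when a variable is blank. Treat it as a sketch under my own assumptions; `build_product_prompt` is a hypothetical function, and the comma-joined wording is just the pattern from the strong prompt above.

```python
def build_product_prompt(product, surface, lighting, background, style=""):
    """Compose a product-shot prompt, rejecting any blank required variable."""
    fields = {
        "product": product,
        "surface": surface,
        "lighting": lighting,
        "background": background,
    }
    missing = [name for name, value in fields.items() if not value.strip()]
    if missing:
        # A blank variable is exactly where the model would fall back on generic defaults.
        raise ValueError(f"missing prompt variables: {', '.join(missing)}")
    prompt = (
        f"{product.strip()} on {surface.strip()}, "
        f"{lighting.strip()}, {background.strip()}"
    )
    if style.strip():
        # Optional photography style reference, e.g. "editorial".
        prompt += f", {style.strip()} style"
    return prompt


print(
    build_product_prompt(
        "A ceramic coffee mug with a matte black finish",
        "a white marble countertop",
        "soft natural window light from the left",
        "clean white background",
        style="commercial product photography",
    )
)
```

The style reference stays optional here because it improves results rather than filling a gap the model would otherwise guess at.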

Prompts for UI and App Screenshots

Useful for app promo videos, these prompts need three things: device frame, screen content, and brand colors. Without the device frame, you get a floating interface. Without brand colors, you get something generic.

Weak prompt: "A mobile app screenshot showing a fitness tracker"

Strong prompt: "An iPhone 15 Pro mockup, screen showing a fitness tracking app dashboard, dark mode, teal accent color, displaying step count 8,432 and a circular progress ring, clean sans-serif UI"
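
The same idea works as a pre-flight check for UI prompts. The sketch below warns about whichever of the three ingredients is missing, using the failure modes described above. `UIMockupSpec` and its field names are hypothetical; nothing here talks to the model.

```python
from dataclasses import dataclass


@dataclass
class UIMockupSpec:
    screen_content: str
    device_frame: str = ""
    brand_colors: str = ""

    def warnings(self):
        """List the likely failure mode for each missing ingredient."""
        notes = []
        if not self.device_frame:
            notes.append("no device frame: expect a floating interface")
        if not self.brand_colors:
            notes.append("no brand colors: expect a generic look")
        return notes

    def to_prompt(self):
        """Join whichever ingredients are present into a single prompt string."""
        parts = []
        if self.device_frame:
            parts.append(f"{self.device_frame} mockup")
        parts.append(f"screen showing {self.screen_content}")
        if self.brand_colors:
            parts.append(self.brand_colors)
        return ", ".join(parts)


weak = UIMockupSpec("a fitness tracking app dashboard")
strong = UIMockupSpec(
    "a fitness tracking app dashboard",
    device_frame="An iPhone 15 Pro",
    brand_colors="dark mode, teal accent color",
)
print(weak.warnings())
print(strong.to_prompt())
```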

GPT Image 2 still struggles with complex multi-character scenes and, in particular, hands. Fingers get merged or duplicated in ways that are hard to fix with prompt edits alone. For those use cases, plan for manual cleanup or avoid close-up hand shots entirely.

Is There a 100% Free AI Image Generator Without Limits?

No. Every free AI image generator imposes at least one constraint: rate limits, watermarks, resolution caps, or credit systems. There’s no such thing as unlimited free generation, and any platform claiming otherwise is worth reading twice before you trust it with commercial work. According to OpenAI’s own usage documentation, even ChatGPT’s free plan caps image generation daily and reserves higher throughput for paid subscribers.

The honest reality is that free tiers exist as freemium funnels. Third-party platforms follow the same model: give users enough to see the value, then convert them.

That said, some free tiers are more generous than others.

Here’s how the options stack up by practical generosity:

- ChatCut - Integrated GPT Image 2 access inside the video editor, no API key required, no watermark on outputs. Best for video creators who don’t want to break their workflow.

- EaseMate AI - Reasonable daily free runs, though resolution is capped on the free tier.

- VisualGPT.io - Free access without sign-up, but generation limits kick in quickly.

- ChatGPT free tier - Works well for occasional use; daily limits apply and commercial rights need verification.

- Replicate - Trial credits only. It’s a pay-per-run API, not a true free tier.

For casual or occasional use, ChatCut, VisualGPT, or EaseMate cover most needs without spending anything. ChatCut also includes AI-generated royalty-free music for creators who need a complete toolkit without paying for separate tools.

For high-volume commercial production, you’ll hit ceilings fast. A paid plan isn’t optional at that scale.
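
To put a number on “fast,” here’s a quick back-of-the-envelope calculation using the roughly 2-3 images per day cap mentioned for ChatGPT’s free tier. The 30-image batch is a hypothetical campaign size I picked for illustration, and `days_needed` is my own helper.

```python
import math


def days_needed(images_needed, images_per_day):
    """Days required to generate a batch under a fixed daily cap."""
    if images_per_day <= 0:
        raise ValueError("images_per_day must be positive")
    return math.ceil(images_needed / images_per_day)


# Hypothetical 30-image campaign at each end of the ~2-3 images/day range.
for cap in (2, 3):
    print(f"{cap}/day -> {days_needed(30, cap)} days for 30 images")
```

At those caps a 30-image batch takes 10 to 15 days, and that’s before counting the retries you’ll spend on prompts that miss.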

One naming issue worth clearing up: “GPT-2” and “GPT Image 2” are unrelated. GPT-2 is a 2019 text generation model from OpenAI, not an image tool. If you landed here looking for GPT-2 pricing, it’s open-source and free to run locally. GPT Image 2 is the 2026 image model covered throughout this article.

If workflow efficiency matters, pairing image generation with AI video editing templates and guided workflows cuts the time between idea and finished video significantly.

Commercial Use Rights: What the Free Tiers Actually Allow

Commercial use rights vary significantly across free-tier platforms, and many users never check before publishing AI-generated images in client work or product packaging. According to OpenAI’s usage policies, images generated through ChatGPT, including the free tier, are generally owned by the user and can be used commercially. OpenAI states that users retain rights to their outputs, subject to the platform’s content guidelines. That’s a strong baseline, but “generally” is doing a lot of work in that sentence. Edge cases exist, and terms change. Verify against the current ToS before using outputs in paid deliverables.

Third-party wrappers are where it gets murky.

VisualGPT.io and EaseMate AI both call the same underlying model, but their own terms of service govern what you can do with the output. Some platforms restrict commercial use on free tiers or require attribution. I’ve seen wrappers quietly add clauses that prohibit resale or client work. Read the fine print before you deliver anything to a paying client.

Replicate’s situation is simpler. Since it’s direct API access to OpenAI’s model, the output rights align closely with OpenAI’s own license. Still, Replicate’s platform terms apply on top, so a quick review is worth two minutes of your time.

ChatCut’s position is clean: generated images are owned by the user, and there’s no watermark applied to AI-generated assets, including those produced through the gpt-image-2 integration. What you generate is yours to use. For a broader look at how ChatCut’s free tier stacks up against alternatives, the ChatCut vs. CapCut comparison breaks down the differences in detail.

The short version: OpenAI’s own products give you the clearest commercial rights. Third-party platforms add a layer of uncertainty. When in doubt, read the ToS before you publish.

FAQs: Free GPT Image 2 Access

How do I use GPT Image 2?

Pick a platform that offers access, write a descriptive prompt, and generate. For the fastest video workflow, the how-to section above walks through the full process inside ChatCut in three steps, no API key required.

Is there a free ChatGPT for images?

Yes. ChatGPT’s free tier includes GPT Image 2 access, but OpenAI caps daily generations for free accounts. If you hit the limit, you’ll need to wait until the next day or upgrade to ChatGPT Plus. For a broader overview of free and low-cost options, the roundup of the best AI video editors covers tools that pair well with AI image generation.

Is GPT-2 still good?

GPT-2 and GPT Image 2 aren’t the same thing. GPT-2 is a 2019 text generation model from OpenAI; GPT Image 2 is a 2026 image generation model. They’re completely unrelated. GPT Image 2 is currently one of the strongest AI image generators available, particularly for text rendering accuracy.

Which AI image generator is 100% free?

None of them, honestly. Every platform imposes some constraint: daily caps, watermarks, resolution limits, or credit systems. The most generous free tiers for casual use are ChatCut, EaseMate, and VisualGPT.io, as covered in the ranking above. For high-volume work, a paid plan becomes necessary.

Can I use GPT Image 2 for video projects?

You can, and it fits naturally into video workflows. If you’re already using an AI editor that handles captions and subtitles, check out automatic video captions with an AI caption generator to keep your entire post-production process in one place. Social creators building content for platforms like Instagram or TikTok can also explore AI tools built specifically for social media content to streamline the full publishing workflow.

Pick the Right Free Access Point for Your Workflow

GPT Image 2 is genuinely free to access, but “free” isn’t the same on every platform. For one-off image generation, ChatGPT’s free tier or EaseMate gets the job done. For video creators, though, neither of those keeps you in your editing workflow.

That’s where ChatCut is different. It’s the only option that puts GPT Image 2 inside the editor itself, so the image you generate lands directly on your timeline. No downloading, no re-importing, no switching tabs. Small friction points like that export-import loop are exactly what kills creative momentum mid-project.

According to OpenAI’s usage policies, images generated through their models can be used commercially, which means the assets you create aren’t locked to personal projects.

Describe what you want in plain English. ChatCut handles the rest.

Try it free at chatcut.io, no API key required and no credit card needed. Whether you’re building a product promo, a talking head video, or a social clip, you’ll have AI-generated visuals ready before you’d finish hunting through a stock library.